AI Statistics for Non-Majors

arigaram

Without a single formula or line of code, this course gets to the essence of the basic statistics needed for AI development and application.

Beginner

AI

We present ways to improve RAG performance based on Cognitive Load Theory. To overcome the limitations of existing RAG enhancement techniques, we synthesize various methods by applying the conceptual framework of cognitive load.

Strategies for understanding and managing LLM context window and token limits

How to generate high-quality chunks and integrate them into a RAG pipeline

This course is still in progress. The downside is that you may have to wait a long time until it is fully finished (although new material will be added frequently). Please take this into consideration when making your purchase decision.

April 22, 2026

We are undergoing a full revision to the 2nd edition before completing the 1st edition. Existing 1st edition sections will be deleted once their corresponding 2nd edition sections are completed.

September 4, 2025

I have uploaded about 2/3 of the integrated summaries for each section. I will upload the remaining integrated summaries one by one shortly.

Section 3 was previously split into Section 3 and Section 4. Because the section numbers in the lecture list and the study materials then no longer matched, which could cause confusion, I have moved Section 4 to Section 31 (at the very end).

September 1, 2025

Section 3 has been split into Section 3 and Section 4. As a result, the section numbers and the lesson material numbers may not match. I will correct the lesson materials, re-record the videos, and repost them. I would appreciate your patience.

We are reorganizing the table of contents to reduce confusion for students. Accordingly, the lessons that were temporarily set to private on August 22nd have been changed back to public.

August 22, 2025

The lessons belonging to the [Advanced] course (Sections 11 to 30), which are not yet completed, have been changed to private. We plan to release them by section or by lesson as they are completed in the future. We ask for your understanding as this is a measure to reduce confusion for students.

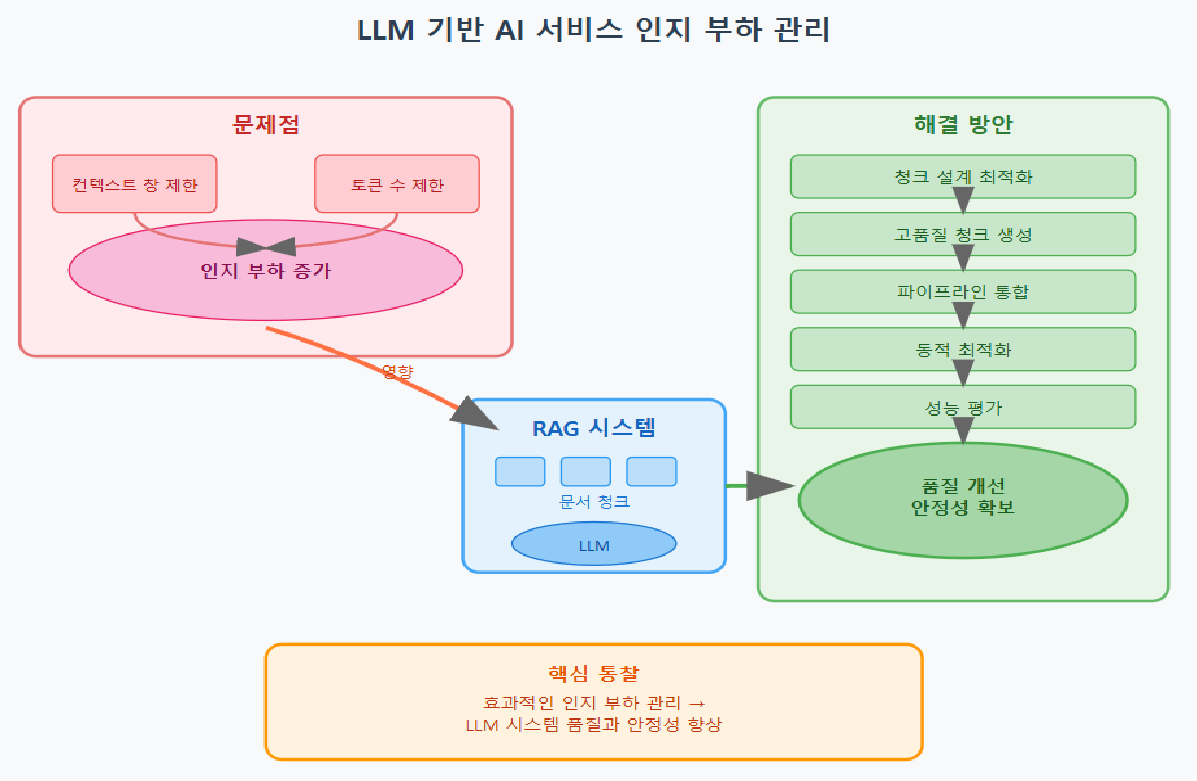

Building artificial intelligence services on Large Language Models (LLMs) has become the mainstream approach, but restricted context window sizes and token counts impose limits. In particular, within Retrieval-Augmented Generation (RAG) systems, failing to properly manage documents or chunks (fragments of documents) places a cognitive load on the LLM, making it difficult to generate optimal responses.

Cognitive load refers to the degree of difficulty in perceiving information based on the amount and complexity of information that a system (including both the human brain and artificial intelligence) must process. When cognitive load increases in an LLM system, information accumulates excessively, blurring the core message, degrading performance, and potentially failing to produce the expected level of response. Therefore, effective cognitive load management is a key factor that determines the quality and stability of LLM-based systems.

In this course, based on the concepts of LLM context window limits and cognitive load, we present step-by-step methodologies that can be applied directly to practical work, ranging from chunk design to high-quality chunk generation, RAG pipeline integration, dynamic optimization, and performance evaluation. We expect this approach to substantially reduce the response quality degradation that existing RAG augmentation techniques have not been able to address.

Strategies for managing cognitive load according to LLM context windows and token limits

Methods for generating high-quality chunks and ways to utilize various chunking techniques

Techniques for integrating data preprocessing, retrieval, prompt design, and post-processing to build a RAG system

Real-time chunk size adjustment and summary parameter control through dynamic optimization

Application of performance evaluation metrics and methods for writing result reports

Artificial Intelligence (AI), ChatGPT, LLM, RAG, AI Transformation (AX)

The first section gives an overview of the course and its goals and covers the basic concepts of the LLM context window and cognitive load management. In particular, we look closely at what cognitive load is and why it matters in an LLM environment, while learning the basics of RAG. Building on this theory, we identify the core topics covered in the lectures and help you set a learning direction. Concepts are explained step by step so that even beginners can follow along, establishing a solid foundation for the advanced topics that come later.

This section provides an in-depth analysis of the LLM's context window and tokenization mechanisms. We will examine in detail what tokens are, how they are split, and how they affect model input, while explaining how context window size limitations impact model performance through various use cases. Additionally, you will learn how to calculate costs based on tokens to develop practical skills for real-world system design. Through this process, you will gain a systematic understanding of tokens and context, allowing you to intuitively grasp specific issues in managing cognitive load.
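As a rough illustration of the token-based cost calculation mentioned above, here is a minimal sketch. The 4-characters-per-token ratio is only a rule of thumb and not a real tokenizer, and the function names and the prices used in the usage note are hypothetical; actual token counts and prices depend on the model and provider.

```python
import math


def rough_token_count(text: str) -> int:
    """Estimate tokens as ~4 characters each (an assumption, not a real tokenizer)."""
    return math.ceil(len(text) / 4)


def estimate_cost(prompt_tokens: int, completion_tokens: int,
                  price_in_per_1k: float, price_out_per_1k: float) -> float:
    """Cost of one request when input and output tokens are priced per 1,000 tokens."""
    return (prompt_tokens / 1000 * price_in_per_1k
            + completion_tokens / 1000 * price_out_per_1k)


def fits_in_window(prompt_tokens: int, max_completion_tokens: int,
                   context_window: int) -> bool:
    """The prompt plus the space reserved for the answer must fit in the window."""
    return prompt_tokens + max_completion_tokens <= context_window
```

For example, with hypothetical prices of 0.5 per 1,000 input tokens and 1.5 per 1,000 output tokens, `estimate_cost(2000, 500, 0.5, 1.5)` adds 1.0 for the prompt and 0.75 for the completion.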

Effective chunk design is the core of RAG system quality. This section introduces various chunking strategies ranging from fixed-size chunks to paragraph-based, semantic clustering, and hierarchical structures, while providing an in-depth look at the pros, cons, and use cases of each method. Based on an understanding of how chunk size and structure impact cognitive load and context utilization, you will acquire practical know-how for designing the optimal chunking strategy for any given situation. Finally, through hands-on exercises, you will gain experience applying various chunking methods to organically connect theory and practice.
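Two of the strategies named above, fixed-size chunks and paragraph-based chunks, might be sketched as follows. This is a simplified illustration that counts whitespace-separated words rather than real tokens; the function names and parameters are hypothetical and not taken from the course materials.

```python
def fixed_size_chunks(words: list[str], size: int, overlap: int = 0) -> list[list[str]]:
    """Split a word list into windows of `size` words that share `overlap` words."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("size must be positive and 0 <= overlap < size")
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(words[start:start + size])
        if start + size >= len(words):
            break  # the last window already reaches the end of the text
    return chunks


def paragraph_chunks(text: str, max_words: int) -> list[str]:
    """Merge consecutive paragraphs until adding one more would exceed `max_words`."""
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    chunks, current, current_len = [], [], 0
    for paragraph in paragraphs:
        n = len(paragraph.split())
        if current and current_len + n > max_words:
            chunks.append("\n\n".join(current))
            current, current_len = [], 0
        current.append(paragraph)
        current_len += n
    if current:
        chunks.append("\n\n".join(current))
    return chunks
```

Fixed-size windows are predictable in length but can cut through a sentence, while paragraph-based chunks respect the author's boundaries at the price of uneven sizes. A single paragraph longer than `max_words` is kept whole in this sketch.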

This section covers more advanced techniques for generating chunks suitable for reducing cognitive load and improving information quality. You will learn various techniques such as smart summarization, merging original text with summaries, embedding-based clustering, meta-tagging, and reflecting query intent, and practice how to combine each technique to create more efficient chunks. Through this, you will develop the capability to generate high-quality chunks that go beyond simple chunking methods to reflect the meaning of information and the intent of the questioner. This is a core strategy to help the LLM provide optimal answers even when dealing with complex documents.
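One way to picture merging the original text with a summary and adding meta-tags is a small record like the one below. It is a sketch under stated assumptions: `EnrichedChunk`, `enrich`, and the tag names are hypothetical, and the first-sentence "summary" is only a placeholder for whatever summarizer (for example, an LLM call) a real system would use.

```python
from dataclasses import dataclass, field


@dataclass
class EnrichedChunk:
    text: str                                 # original chunk text
    summary: str                              # short summary stored with the original
    tags: dict = field(default_factory=dict)  # meta-tags such as source or section

    def to_index_text(self) -> str:
        """Combine tags, summary, and original text into one string for indexing."""
        tag_line = " ".join(f"[{k}={v}]" for k, v in sorted(self.tags.items()))
        return f"{tag_line}\nSummary: {self.summary}\n{self.text}".strip()


def first_sentence_summary(text: str) -> str:
    """Placeholder summarizer: return the first sentence (not a real summary model)."""
    head, sep, _ = text.partition(". ")
    return head + "." if sep else text


def enrich(text: str, **tags) -> EnrichedChunk:
    """Attach a placeholder summary and arbitrary meta-tags to a chunk."""
    return EnrichedChunk(text=text, summary=first_sentence_summary(text), tags=tags)
```

Keeping the summary next to the original lets retrieval match on the condensed meaning while the full text remains available for the prompt.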

This section covers the design and integration of RAG systems in earnest. It systematically addresses the entire RAG pipeline process, from preprocessing, similarity search and filtering, chunk restructuring and prompt design, to answer generation and post-processing, as well as hallucination checks and re-injection strategies. You will learn the know-how to focus on generating accurate answers while minimizing cognitive load at each stage through hands-on practice. It focuses on providing practical techniques and problem-solving methods that can be immediately applied in real-world environments.
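The retrieval, filtering, and prompt design stages described above can be illustrated with a toy pipeline. This sketch swaps in bag-of-words cosine similarity for a real embedding model and stops before the answer generation step; the function names, the `min_score` threshold, and the word-count token budget are all assumptions made for illustration.

```python
import math
from collections import Counter


def bow(text: str) -> Counter:
    """Bag-of-words vector: a stand-in for a real embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[str], top_k: int = 3,
             min_score: float = 0.1) -> list[str]:
    """Similarity search plus filtering: drop weak matches, keep the best top_k."""
    q = bow(query)
    scored = [(cosine(q, bow(c)), c) for c in chunks]
    scored = [(s, c) for s, c in scored if s >= min_score]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [c for _, c in scored[:top_k]]


def build_prompt(query: str, chunks: list[str], token_budget: int) -> str:
    """Add chunks in rank order until the (word-count) budget would be exceeded."""
    kept, used = [], 0
    for chunk in chunks:
        cost = len(chunk.split())
        if used + cost > token_budget:
            break
        kept.append(chunk)
        used += cost
    context = "\n---\n".join(kept)
    return ("Answer using only the context below.\n\n"
            f"Context:\n{context}\n\nQuestion: {query}")
```

Filtering before prompt assembly is what keeps irrelevant chunks out of the context window, which is the load-reduction idea this section describes.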

This section covers how to dynamically adjust context load and chunk size according to the situation. It provides an in-depth introduction to automation and optimization strategies for intelligent system operation, ranging from question complexity evaluation, dynamic chunk size adjustment algorithms, and adaptive summary parameter tuning to context accumulation management in multi-turn conversations, and system monitoring and feedback loop design. Through this, you will acquire real-time management capabilities to maximize LLM performance while responding to changing demands and complexity.
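A minimal sketch of two of these ideas, dynamic chunk size adjustment and context accumulation management in multi-turn conversations, is shown below. The complexity heuristic, the choice to shrink chunks as complexity rises, and the default sizes are all assumptions made for illustration, not the algorithms taught in the course.

```python
CONNECTIVES = {"and", "or", "compare", "versus", "why", "how"}


def question_complexity(question: str) -> float:
    """Hypothetical score in [0, 1]: longer questions with more connectives rank higher."""
    words = [w.strip(",.?") for w in question.lower().split()]
    connectives = sum(w in CONNECTIVES for w in words)
    return min(1.0, len(words) / 40 + connectives * 0.15)


def dynamic_chunk_size(complexity: float, min_size: int = 128,
                       max_size: int = 512) -> int:
    """Assumed policy: complex questions get smaller chunks so more of them fit."""
    c = min(1.0, max(0.0, complexity))
    return round(max_size - c * (max_size - min_size))


def trim_history(turns: list[str], token_budget: int) -> list[str]:
    """Keep the most recent turns whose total (word-count) size fits the budget."""
    kept, used = [], 0
    for turn in reversed(turns):
        cost = len(turn.split())
        if used + cost > token_budget:
            break
        kept.append(turn)
        used += cost
    return list(reversed(kept))
```

A production system would more likely summarize older turns than drop them outright; trimming is simply the easiest policy to show.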

This section covers various metrics and evaluation methodologies to objectively assess the effectiveness of RAG systems and chunking strategies. You will learn how to identify areas for improvement through multi-faceted system performance measurements, ranging from recall, precision, response latency, cost analysis, token usage efficiency, and user satisfaction to strategy validation via A/B testing. Based on the evaluation results, it provides insights for continuous performance tuning and enhancement, strengthening your data-driven decision-making capabilities.
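For the retrieval side of evaluation, precision and recall at a cutoff k can be computed as in the sketch below. The function name and the chunk IDs in the usage note are hypothetical; the relevance labels would have to come from a hand-labeled evaluation set.

```python
def precision_recall_at_k(retrieved: list[str], relevant: set[str],
                          k: int) -> tuple[float, float]:
    """Precision@k and recall@k for one query.

    retrieved: chunk IDs in ranked order; relevant: IDs labeled as relevant.
    """
    top_k = retrieved[:k]
    hits = sum(1 for chunk_id in top_k if chunk_id in relevant)
    precision = hits / len(top_k) if top_k else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall
```

For instance, if the top three results are `c1`, `c4`, `c2` and the relevant set is `{c1, c2, c3}`, two of the three retrieved chunks are relevant and two of the three relevant chunks were found, so both values are 2/3. Averaging these per-query values over a test set gives figures that can be compared across chunking strategies in an A/B test.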

This section discusses the open research challenges and future scalability of RAG and LLM cognitive load management. It covers scenarios such as fully automated chunk optimization, long-term memory integration, building RAG systems on large-scale multimedia documents, and extending RAG systems to process multimodal information. Through the latest research trends and practical application cases, it provides a clear understanding of future development directions and challenges.

In this section, we explain how to carry out a comprehensive project that integrates the theories and techniques learned so far to design, implement, tune, and evaluate an actual RAG system. You will verify your practical capabilities by working through every stage in order: project topic selection, data collection and preprocessing, chunking strategy design, RAG system integration, performance evaluation, result report writing, and the final presentation and code review. Based on what you learn here, developers will be able to conduct practical projects in teams or individually and fully internalize the content of this course.

Based on the concept of cognitive load, you will gain a clear understanding of LLM context windows and token limits, and acquire strategies to manage them.

By using various chunking and optimization techniques, you can efficiently split and summarize information to maximize LLM performance.

Through hands-on practice of the entire RAG pipeline process, you will acquire the ability to build and tune actual systems.

Through dynamic optimization and performance evaluation, you will learn how to provide stable and high-performance AI services in real-time production environments.

Through the latest research topics and expansion directions, you will understand the trends in AI system development and strengthen your future readiness.

Who is this course right for?

Developers who directly design or operate LLM and RAG systems

AI engineers interested in optimizing large-scale document processing and multi-turn conversation handling

What do you need to know before starting?

Understanding the basic concepts of Natural Language Processing (NLP)

Understanding the basic operating principles of Large Language Models (LLMs)

Concepts of Tokenization and Context Windows

Basic programming skills (Python language recommended)

(Optional) Experience using artificial intelligence and machine learning models, or experience conducting related projects.

696 learners ∙ 38 reviews ∙ 2 answers ∙ 4.6 rating ∙ 18 courses

I am someone for whom IT is both a hobby and a profession.

I have a diverse background in writing, translation, consulting, development, and lecturing.


477 lectures ∙ (46hr 37min)
