Hello, I am Heejin Cho, an AI Engineer and Full-Stack Developer. I focus on creating 'living services' that deliver real value to users, rather than just running models.

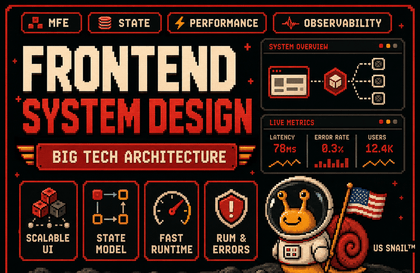

Practical Tech Stack: Working in Python (FastAPI, Django, LangChain) and JavaScript/TypeScript (React, Next.js), I design full-stack architectures that connect complex AI logic to a smooth user experience.

Proven Expertise: I have a track record in global competitions, including winning the NASA Space Apps Challenge and being selected as a national representative for the Hult Prize. I have also launched and operated real-world services myself, such as 'InterviewMate', a real-time interview assistance service.

In-depth Research: Beyond applying existing tools, I study the principles behind the latest AI technologies through research on prompt architecture and reasoning frameworks (STAR Framework), including papers published on arXiv.

"I teach code that works in the market, not just code for studying."

If vague AI theory has left you frustrated, come and work through the problem-solving process of building actual products with me.

![[AI] Implementing Ideas with Prompts Only_Vibe Coding Basics Course Thumbnail](https://cdn.inflearn.com/public/files/courses/336725/cover/01jr2vbd3dr8hh1n4qn2jcyj02?w=420)

![[Inflearn Award Bestseller] How to Become an AI Automation Expert Without Coding, The Complete n8n Guide Course Thumbnail](https://cdn.inflearn.com/public/files/courses/337053/cover/01jrkjt8emye2z7j7846br3vkk?w=420)

![Just 1 hour! Creating 'My Own AI Senior Developer' to install on my computer (Antigravity Vibe Coding) [Source code provided] Course Thumbnail](https://cdn.inflearn.com/public/files/courses/340332/cover/ai/3/e87ee52b-1099-42db-a384-64ab8c725470.png?w=420)