This course explains in detail the language models developed along the way, from the beginnings of natural language processing to the latest LLMs.

20 learners are taking this course

Level: Beginner

Course period: Unlimited

The development process of language models and the principles of each language model

Origins of NLP

The structure and principles of Transformers

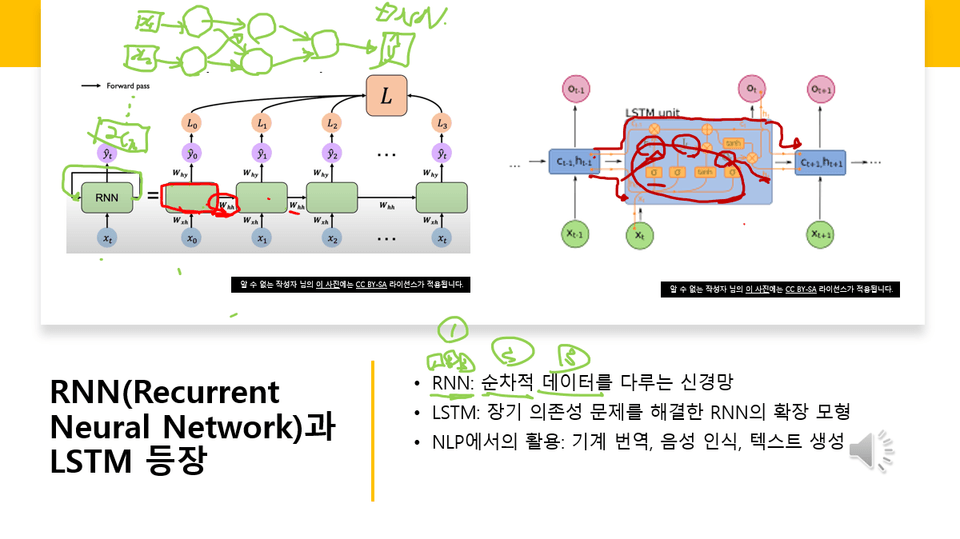

Structure and principles of RNN and LSTM

Principles of the Attention Mechanism

This course is still in production. The downside is that you may have to wait a long time until all lectures are finished, although new material will be added frequently. Please take this into consideration when making your purchase decision.

December 10, 2025

I have published the table of contents in advance because I plan to add a significant number of new lessons. The new lessons are marked [2nd Edition].

I have marked the existing lessons as [1st Edition]. I plan to revise the existing lessons. Once they are updated with the revised content, the lesson titles will be marked as [2nd Edition].

This course is a comprehensive learning journey through the evolution of language models, from early natural language processing research to the latest Large Language Models (LLMs). You will systematically understand the technological shifts starting from the rule-based era, through statistical language models, neural network-based models, and the Transformer revolution, leading up to today's multimodal, efficiency-focused, and application-oriented LLMs.

Understand the overall flow of how language models have evolved.

Identify the characteristics of key models from each era (RNN, LSTM, Transformer, BERT, GPT, etc.).

Structurally organize the latest LLM technologies and research trends.

Understand LLM efficiency techniques and their practical application methods.

Critically examine the future directions and limitations of LLM research.

The course consists of six sections, each organized around chronological trends and research themes.

Section 1: Origins and Early Development of NLP

Section 2: Language Model Research Before Transformers

Section 3: The Transformer Revolution and Large Language Models

Section 4: Latest LLM Technologies and Research Trends

Section 5: LLM Efficiency Techniques and Model Optimization

Section 6: LLM Applications, System Integration, and Future Outlook

In this section, you will learn about the starting point of NLP and the foundations of early language models.

What problems NLP addresses and how it began

How rule-based systems were structured and why they encountered limitations

How statistical language models (n-gram LM) emerged

The emergence and significance of early large-scale corpora, such as the Brown Corpus and Penn Treebank

The concept of the Distributional Hypothesis and its application in NLP

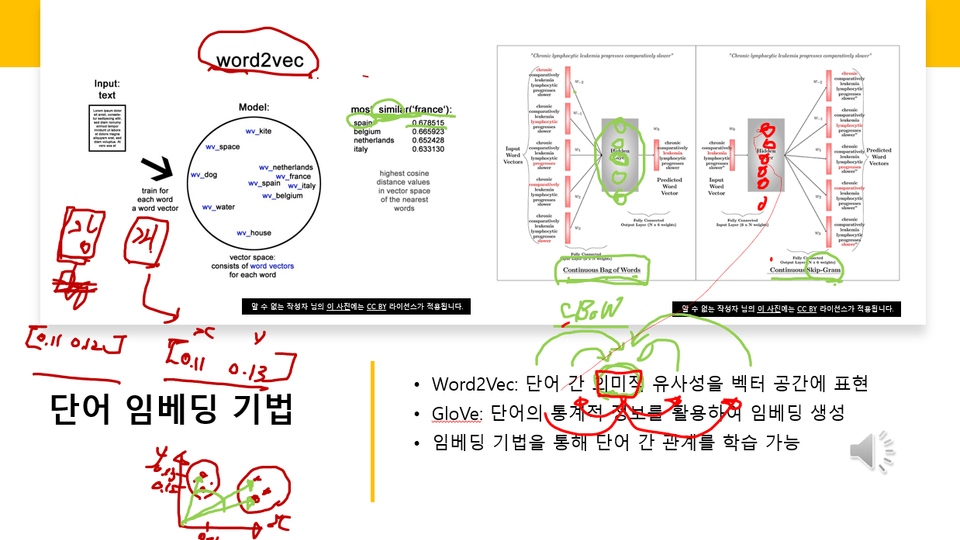

The birth and contributions of early word embedding technologies such as Word2Vec and GloVe
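As a rough illustration of the statistical (n-gram) language model idea listed above, the following minimal sketch estimates bigram probabilities by counting. The toy corpus, the `train_bigram` name, and the `<s>`/`</s>` boundary tokens are assumptions made for this example and are not taken from the course materials.

```python
from collections import Counter, defaultdict

def train_bigram(sentences):
    """Estimate P(next | prev) by maximum likelihood from raw bigram counts."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        # Hypothetical boundary tokens mark sentence start and end.
        tokens = ["<s>"] + sentence.lower().split() + ["</s>"]
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    # Normalize each row of counts into a probability distribution.
    return {
        prev: {word: c / sum(nxts.values()) for word, c in nxts.items()}
        for prev, nxts in counts.items()
    }

corpus = ["the cat sat", "the cat ran", "the dog sat"]  # toy data, illustration only
model = train_bigram(corpus)
print(model["the"])  # "cat" follows "the" twice, "dog" once
```

Unseen word pairs get no probability at all in this sketch, which is one of the sparsity problems that smoothing methods and, later, neural models were meant to address.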

This section covers how RNN-based models transformed NLP and the technical limitations prior to Transformers.

The background of the emergence and structural principles of RNN, LSTM, and GRU

The essence of the long-term dependency problem

How the Seq2Seq architecture led the innovation in machine translation

The reason for the emergence of the Attention mechanism and its effects

The main ideas of CNN-based language models (these belong to a separate line of research, so the depth of coverage is not yet settled)

Understand the need for next-generation models by summarizing the research landscape immediately preceding the Transformer.
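To make the attention item above more concrete, here is a minimal sketch of attention over encoder states for a single decoder step. It uses a plain dot-product score for brevity, whereas the early Seq2Seq attention work used a learned additive score, so treat this as a simplified stand-in. The function names and the toy vectors are assumptions for illustration.

```python
import math

def softmax(scores):
    """Turn raw scores into weights that sum to 1 (shifted by the max for stability)."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(query, encoder_states):
    """Return (weights, context) for one decoder query over all encoder states."""
    # Dot-product score between the query and every encoder state.
    scores = [sum(q * h for q, h in zip(query, state)) for state in encoder_states]
    weights = softmax(scores)
    dim = len(encoder_states[0])
    # The context vector is the weighted average of the encoder states.
    context = [
        sum(w * state[i] for w, state in zip(weights, encoder_states))
        for i in range(dim)
    ]
    return weights, context

encoder_states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # toy values
query = [2.0, 0.0]
weights, context = attend(query, encoder_states)
print(weights, context)
```

Because the decoder can look back at every encoder state at each step, it no longer has to squeeze the whole source sentence into one fixed-size vector, which is the bottleneck attention was introduced to relieve.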

In this section, you will learn how the modern LLM era, centered around Transformers, began.

The structure and characteristics of the Transformer, introduced in “Attention Is All You Need”

Background on the emergence of Pretraining and Language Understanding models

The concept of bidirectionality in the BERT model and the MLM (Masked LM) technique

The main evolutionary trends of the GPT series (GPT-1 to GPT-4)

Establishment of the standardized learning paradigm of “Pre-training → Fine-tuning”

The meaning of Scaling Laws and changes in LLM training strategies
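The MLM technique mentioned above can be sketched as a data-preparation step. The example below follows the masking recipe described for BERT (select about 15% of tokens; of those, replace 80% with `[MASK]`, 10% with a random token, and leave 10% unchanged). The `mask_tokens` function, the toy vocabulary, and the fixed seed are assumptions for illustration, not code from the course.

```python
import random

def mask_tokens(tokens, vocab, mask_prob=0.15, rng=None):
    """Return (inputs, labels); labels hold the original token only at selected positions."""
    if rng is None:
        rng = random.Random(0)  # fixed seed so the sketch is repeatable
    inputs, labels = [], []
    for tok in tokens:
        if rng.random() < mask_prob:
            labels.append(tok)  # the model must predict the original token here
            roll = rng.random()
            if roll < 0.8:
                inputs.append("[MASK]")           # 80%: replace with the mask token
            elif roll < 0.9:
                inputs.append(rng.choice(vocab))  # 10%: replace with a random token
            else:
                inputs.append(tok)                # 10%: keep the token unchanged
        else:
            inputs.append(tok)
            labels.append(None)  # position is ignored by the training loss
    return inputs, labels

tokens = "the model reads text in both directions at once".split()
vocab = ["the", "model", "reads", "text", "cat", "dog"]  # toy vocabulary
inputs, labels = mask_tokens(tokens, vocab, mask_prob=0.3)
print(inputs)
print(labels)
```

Since the hidden token can be predicted from words on both its left and its right, this objective is what lets BERT train a bidirectional encoder.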

This section covers not only the architecture, characteristics, and training methods of the latest LLMs, but also human feedback-based models.

Common characteristics of the latest LLMs, such as GPT-4, Llama, and Claude

The background behind the emergence of open-source LLMs (e.g., Llama, Mistral)

Techniques for aligning models with user intent and preferences, such as RLHF, DPO, and Instruction Tuning

Structure and use cases of multimodal models

Research on bias, hallucinations, and safety, along with ethical considerations

This section focuses on technologies that make large-scale models lighter and faster.

Quantization, Pruning, Knowledge Distillation

PEFT (Parameter-Efficient Fine-Tuning) such as LoRA and Prefix Tuning

High-speed Attention algorithms such as FlashAttention

Inference cost reduction techniques

Concepts and technical challenges of on-device LLM

Efficiency optimization cases in actual service application
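As one small illustration of the quantization item above, the following sketch applies symmetric per-tensor int8 quantization to a list of weights and then restores approximate values. This is only one of several quantization schemes, and the function names and sample weights are assumptions for the example.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using a single shared scale."""
    # The scale maps the largest magnitude to 127; fall back to 1.0 if all weights are zero.
    scale = max(abs(w) for w in weights) / 127 or 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate float weights from the stored integers."""
    return [q * scale for q in quantized]

weights = [0.82, -1.27, 0.03, 0.5, -0.41]  # toy values
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
print(quantized)
print(restored)
```

Each weight now needs 8 bits instead of 32, at the cost of a rounding error of at most half the scale per weight.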

In this section, you will learn how LLMs are used in real systems and services. We will conclude by summarizing future directions while acknowledging the remaining uncertainties.

Structure and advantages of Retrieval-Augmented Generation (RAG)

Principles of tool-use based LLMs such as Toolformer and ReAct

Domain-specific LLMs for fields such as medicine, law, and coding

Expansion of multimodal models such as GPT-4V

Research on LLM-based autonomous systems (some aspects remain uncertain)

Future prospects and points of debate regarding LLMs (e.g., the possibility of AGI, which remains uncertain)
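The basic flow of Retrieval-Augmented Generation listed above (retrieve relevant text, then place it in the prompt) can be sketched as follows. This is a simplified illustration: it ranks documents by bag-of-words cosine similarity, whereas practical systems typically use dense embeddings and a vector index, and the final call to a language model is omitted. All function names and sample documents are assumptions.

```python
import math
from collections import Counter

def bow(text):
    """Bag-of-words vector: lowercase token counts."""
    return Counter(text.lower().split())

def cosine(a, b):
    """Cosine similarity between two count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(question, documents, k=1):
    """Return the k documents most similar to the question."""
    q = bow(question)
    ranked = sorted(documents, key=lambda d: cosine(q, bow(d)), reverse=True)
    return ranked[:k]

def build_prompt(question, documents, k=1):
    """Place the retrieved text ahead of the question; the LLM call itself is left out."""
    context = "\n".join(retrieve(question, documents, k))
    return f"Context:\n{context}\n\nQuestion: {question}\nAnswer:"

documents = [  # toy knowledge base
    "LoRA adds small low-rank matrices to frozen weights",
    "FlashAttention reduces memory traffic in attention",
    "RAG retrieves documents and adds them to the prompt",
]
prompt = build_prompt("what does LoRA add to frozen weights", documents)
print(prompt)
```

Keeping the knowledge in an external document store means it can be updated without retraining the model, which is one of the main advantages usually cited for RAG.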

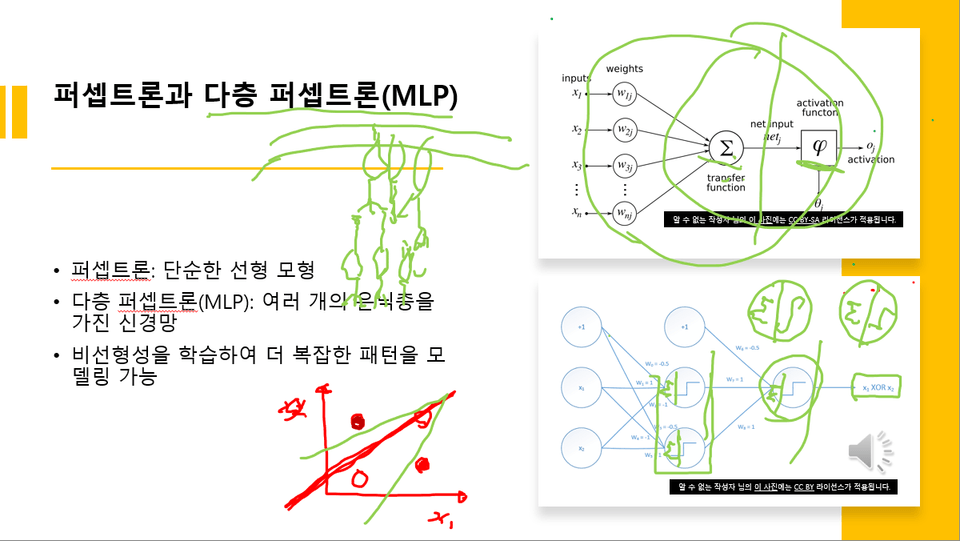

As the example screens below show, the lectures use a variety of diagrams to explain LLM-related concepts in detail. Diagrams covering NLP, RNNs, self-attention, Transformers, and LLMs receive especially thorough explanation.

Screen example 1 explained in Lesson 3

Screen example 2 explained in Lesson 3

Screen example 3 explained in Lesson 3

Learners interested in AI and data science

Developers and researchers who want to systematically understand NLP or LLM technology

Those who want to grasp the latest trends in AI technology

Basic machine learning concepts

Experience using simple Python-based models (Recommended)

You can gain a deep understanding of the overall history of language model development.

You can acquire the foundational knowledge to analyze and utilize the latest LLM technologies and trends.

You can design problem-solving strategies, service architectures, and research directions using LLMs.

Since this is a theory-oriented lecture, a separate practice environment is not required.

The lecture notes are attached as a PDF file.

History and Evolution of LLMs: From the Origins of Language Models to the Latest Technologies

Who is this course right for?

Those who want to know the origins, development process, and technical trends of LLMs

Those who want to know the artificial neural network structure that serves as the foundation of LLMs

Those who want to build theoretical knowledge for developing LLMs themselves

695 learners ∙ 38 reviews ∙ 2 answers ∙ 4.6 rating ∙ 18 courses

I am someone for whom IT is both a hobby and a profession.

I have a diverse background in writing, translation, consulting, development, and lecturing.

72 lectures ∙ (10hr 39min)
