AISchool

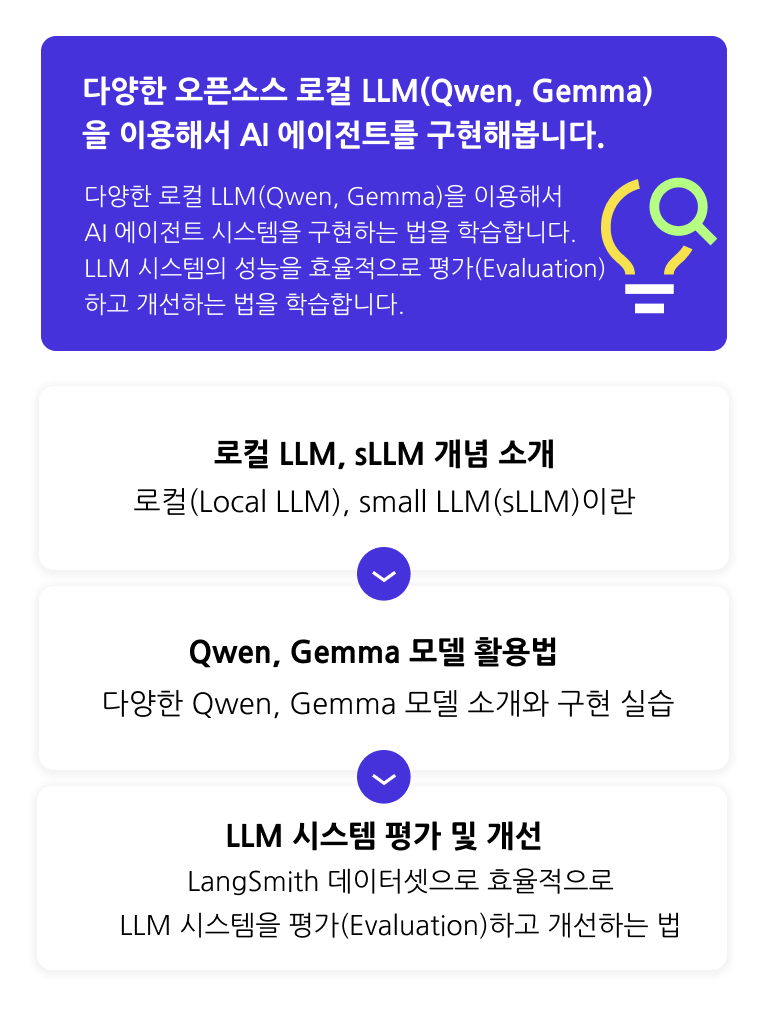

Learn how to utilize various local LLMs (Qwen, Gemma) and explore different techniques to efficiently evaluate and improve the performance of LLM systems.

34 learners

Level Intermediate

Course period Unlimited

How to implement an AI agent using local LLMs (Qwen, Gemma)

How to Evaluate and Improve the Performance of LLM Systems

How to Implement High-Quality AI Agents

Through hands-on practice, you'll implement various AI agents using Local LLMs.

How to efficiently utilize local LLMs (Qwen, Gemma)

How to effectively evaluate and improve the performance of LLM systems

Those who want to build AI agents using local LLMs (Qwen, Gemma)

Those who want to implement AI agents with local LLMs rather than the OpenAI API

Those who want to improve their LLM system implementation skills

Those who want to learn how to efficiently evaluate and improve LLM performance

Those who don't want to miss out on the latest AI trends

Those who want to keep up with the latest LLM models and stay current with AI trends

👋 This course requires prerequisite knowledge of Python, Natural Language Processing (NLP), LLM, LangChain, and LangGraph. Please make sure to take the courses below first or have equivalent knowledge before taking this course.

Large Language Models (LLM) for Everyone Part 5 - Building Your Own AI Agent with LangGraph

Q. What is a Local LLM?

A local LLM is a large language model (LLM) that runs directly on your own PC or server (your local environment). In other words, instead of sending requests to a remote cloud service such as the OpenAI API, you run the model on your own computer's CPU/GPU to perform text generation, summarization, translation, coding assistance, and similar tasks. Representative local LLMs include the Qwen and Gemma models.
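To make the "local instead of cloud" idea concrete, here is a minimal sketch that builds a chat request aimed at a server on your own machine. It assumes you run a local server that exposes an OpenAI-compatible chat endpoint; the URL, port, and model tag below are illustrative assumptions, not something this course page specifies.

```python
import json
import urllib.request

# Assumed values for illustration only; adjust them to the local server you run.
LOCAL_URL = "http://localhost:11434/v1/chat/completions"  # hypothetical local endpoint
MODEL_NAME = "qwen2.5:7b"  # hypothetical model tag


def build_chat_request(prompt: str, url: str = LOCAL_URL,
                       model: str = MODEL_NAME) -> urllib.request.Request:
    """Build (but do not send) a chat request aimed at a local server."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and return the reply text (requires a running server)."""
    with urllib.request.urlopen(request) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    req = build_chat_request("Summarize what a local LLM is in one sentence.")
    # The request targets localhost, so the prompt never leaves your machine.
    print(req.full_url)
```

Only `send` needs a running server; `build_chat_request` can be inspected on its own to confirm where the prompt would go.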

Q. What are the advantages of using a local LLM?

The advantages of using open-source local LLMs are as follows.

Data Control/Privacy

Sensitive data (internal documents, customer information) can be processed on-premises/in-house VPC without sending it to external APIs.

Advantageous for security audits/regulatory compliance (finance, healthcare, etc.).

Predictable cost structure

Instead of per-token billing as with APIs, costs are largely fixed GPU/server expenses, which tends to become more advantageous as traffic grows.

You can reduce unit costs directly through caching, batching, and quantization.
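The trade-off between per-token billing and a fixed server cost can be sketched with simple arithmetic. All prices below are made-up placeholders, and the model deliberately ignores electricity, operations, and engineering time, so treat it as a rough illustration rather than a pricing guide.

```python
def api_cost(tokens: int, price_per_million: float) -> float:
    """Monthly API bill under per-token pricing."""
    return tokens / 1_000_000 * price_per_million


def break_even_tokens(fixed_monthly_cost: float, price_per_million: float) -> float:
    """Monthly token volume at which the API bill equals the fixed server cost."""
    return fixed_monthly_cost / price_per_million * 1_000_000


def cheaper_option(tokens: int, fixed_monthly_cost: float,
                   price_per_million: float) -> str:
    """Return 'local' when the fixed cost is below the API bill, else 'api'."""
    if api_cost(tokens, price_per_million) > fixed_monthly_cost:
        return "local"
    return "api"


if __name__ == "__main__":
    # Hypothetical numbers: 1,000 USD/month for a GPU server,
    # 5 USD per million tokens for a hosted API.
    fixed_cost = 1000.0
    api_price = 5.0
    print(f"{break_even_tokens(fixed_cost, api_price):,.0f}")  # 200,000,000
    print(cheaper_option(300_000_000, fixed_cost, api_price))  # local
    print(cheaper_option(100_000_000, fixed_cost, api_price))  # api
```

Below the break-even volume the hosted API is cheaper under these assumptions; above it, the fixed-cost server wins, which is the "more advantageous with higher traffic" effect described above.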

Easy customization (tuning/domain specialization)

LoRA/QLoRA, DPO, RAG tuning, fixed system prompts, and similar techniques enable optimization for a specific task or domain.

Well suited to raising compliance with output formats (e.g., strict JSON), terminology, tone, and rules to production-level standards.
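One way to reason about "strict JSON" compliance is to measure it. The sketch below checks whether a model reply is valid JSON with the expected keys, retries on failure, and computes a compliance rate over a batch of replies. The required keys and the `generate` callable are hypothetical stand-ins for whatever model call you use; none of this is taken from the course material.

```python
import json
from typing import Callable, Optional, Sequence

REQUIRED_KEYS = {"title", "summary"}  # hypothetical output schema


def parse_strict_json(text: str,
                      required_keys: set = REQUIRED_KEYS) -> Optional[dict]:
    """Return the parsed object only if the whole reply is a JSON object
    containing every required key; otherwise return None."""
    try:
        data = json.loads(text)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict) or not required_keys.issubset(data):
        return None
    return data


def generate_with_retry(generate: Callable[[str], str], prompt: str,
                        max_attempts: int = 3) -> Optional[dict]:
    """Call the (hypothetical) model function until its reply passes the check."""
    for _ in range(max_attempts):
        parsed = parse_strict_json(generate(prompt))
        if parsed is not None:
            return parsed
    return None


def compliance_rate(outputs: Sequence[str]) -> float:
    """Fraction of replies that pass the strict JSON check."""
    if not outputs:
        return 0.0
    passed = sum(parse_strict_json(o) is not None for o in outputs)
    return passed / len(outputs)
```

Tracking `compliance_rate` before and after a prompt change or fine-tuning run gives a simple number to compare, which fits the evaluate-then-improve loop this course describes.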

Reduced Vendor Lock-in

Less vulnerable to specific company policy changes, price increases, model discontinuation, or rate limit changes.

You can switch to a different model anytime if needed.

Transparency/Debugging Benefits

Weight/architecture information is often publicly available, making it possible to diagnose performance issues more systematically.

Easy to conduct safety/bias testing based on internal standards.

Q. Is prior knowledge required?

This course, [Local LLM Utilization Guide Part 1 - Utilizing small LLM(sLLM) & Evaluating and Improving LLM Performance], covers practical projects that implement AI agents using LLMs with the LangChain and LangGraph libraries. The course therefore assumes basic knowledge of Python, natural language processing, LLMs, LangChain, and LangGraph. If you lack this prerequisite knowledge, please be sure to take the prerequisite course [Large Language Models LLM for Everyone Part 5 - Building Your Own AI Agent with LangGraph] first.

Who is this course right for?

For those who want to learn how to utilize Local LLM

People who want to create AI agents with local LLMs (Qwen, Gemma)

Need to know before starting?

Experience using Python

Completion of the prerequisite course [Large Language Models LLM for Everyone Part 5 - Building Your Own AI Agent with LangGraph]

10,106 learners ∙ 814 reviews ∙ 361 answers ∙ 4.6 rating ∙ 32 courses

45 lectures ∙ (8hr 40min)

2 reviews
