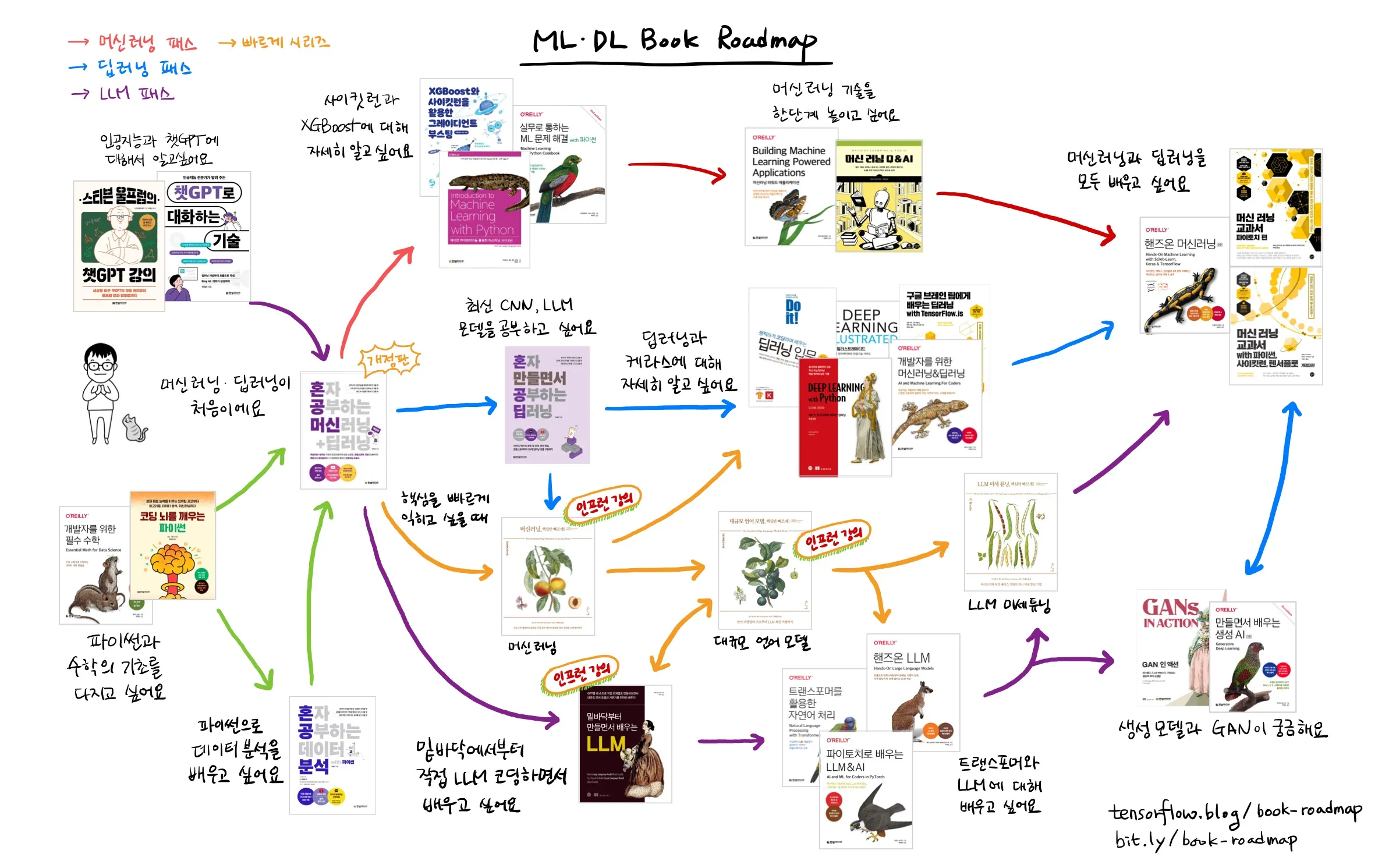

He majored in mechanical engineering but has been reading and writing code ever since graduating. He is a Google AI/Cloud GDE and a Microsoft AI MVP. He runs the TensorFlow blog (tensorflow.blog) and enjoys exploring the boundary between software and science by writing and translating books on machine learning and deep learning.

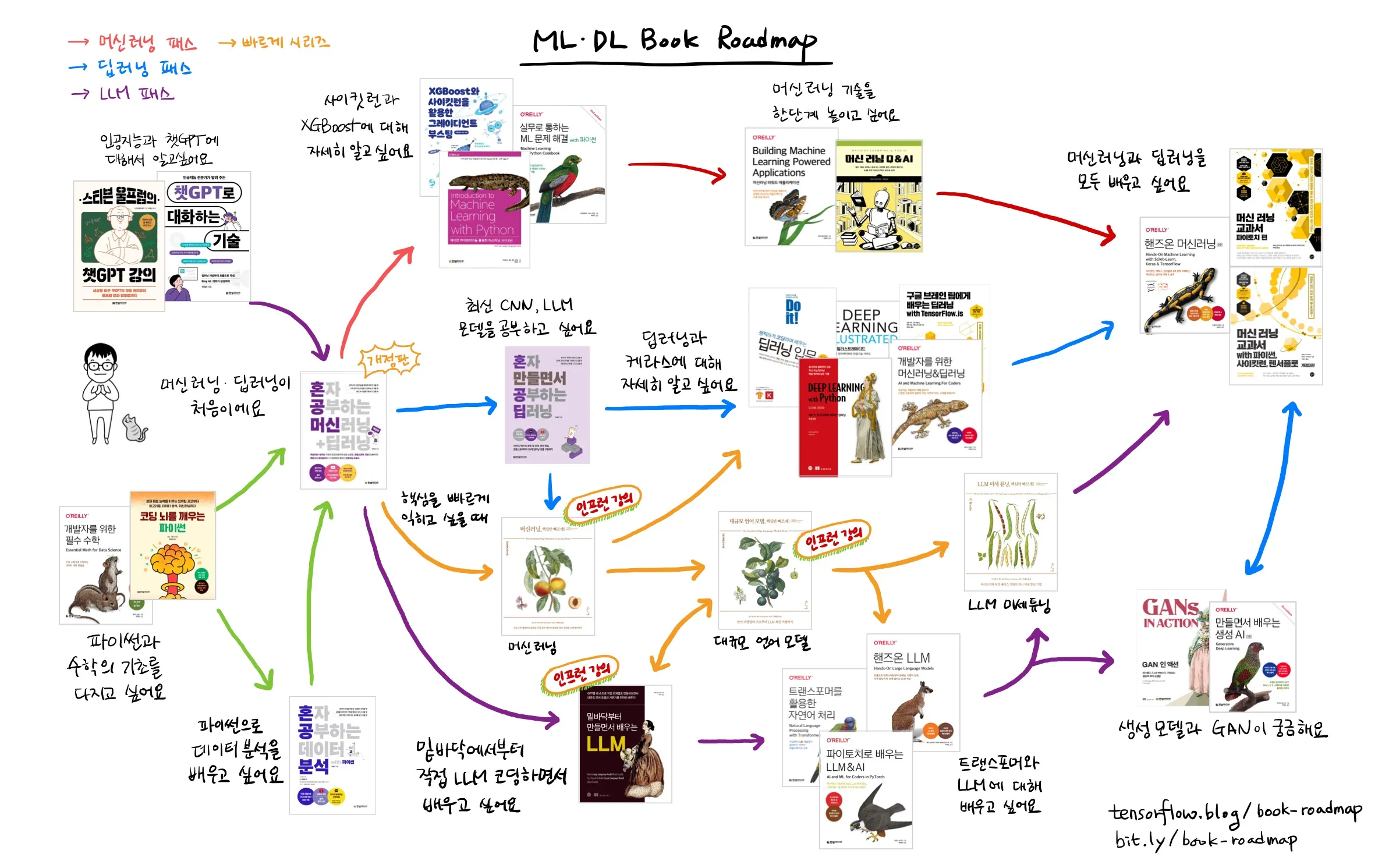

He has authored "Deep Learning by Building Alone" (Hanbit Media, 2025), "Machine Learning + Deep Learning Alone (Revised Edition)" (Hanbit Media, 2025), "Data Analysis with Python Alone" (Hanbit Media, 2023), "The Art of Conversing with ChatGPT" (Hanbit Media, 2023), and "Do it! Introduction to Deep Learning" (EasysPublishing, 2019).

He has translated dozens of books into Korean, including "LLM Fine-Tuning: Quick Core Concepts!" (Insight, 2026), "Learning LLM & AI with PyTorch" (Hanbit Media, 2026), "Large Language Models: Quick Core Concepts!" (Insight, 2025), "Machine Learning: Quick Core Concepts!" (Insight, 2025), "Learning LLM by Building from Scratch" (Gilbut, 2025), "Hands-On LLM" (Hanbit Media, 2025), "Machine Learning Q & AI" (Gilbut, 2025), "Mathematics for Developers" (Hanbit Media, 2024), "Practical ML Problem Solving with Python" (Hanbit Media, 2024), "Machine Learning Textbook: PyTorch Edition" (Gilbut, 2023), "Stephen Wolfram's ChatGPT Lecture" (Hanbit Media, 2023), "Hands-On Machine Learning, 3rd Edition" (Hanbit Media, 2023), "Generative Deep Learning, 2nd Edition" (Hanbit Media, 2023), "Python for Awakening the Coding Brain" (Hanbit Media, 2023), "Natural Language Processing with Transformers" (Hanbit Media, 2022), "Deep Learning with Python, 2nd Edition" (Gilbut, 2022), "Machine Learning & Deep Learning for Developers" (Hanbit Media, 2022), "Gradient Boosting with XGBoost and Scikit-Learn" (Hanbit Media, 2022), "Deep Learning with TensorFlow.js" (Gilbut, 2022), and "Introduction to Machine Learning with Python, 2nd Edition" (Hanbit Media, 2022).