Lee JinKyu

AI·LLM·Big Data Analysis Expert / CEO of Happy AI

👉 See the link below for a detailed profile.

https://bit.ly/jinkyu-profile

Hello.

I am Lee JinKyu (Ph.D. in Engineering, Artificial Intelligence), CEO of Happy AI. I have worked continuously on AI and big data analysis across R&D, education, and client projects.

Using Natural Language Processing (NLP) and text mining, I have analyzed many types of unstructured data, including surveys, documents, reviews, media, policies, and academic data.

Recently, I have been using Generative AI and Large Language Models (LLMs) to deliver practical AI applications tailored to each organization and its work environment.

We have collaborated with numerous public institutions, corporations, and educational organizations, including Samsung Electronics, Seoul National University, the Office of Education, Gyeonggi Research Institute, the Korea Forest Service, the Korea National Park Service, and the Seoul Metropolitan Government. We have conducted more than 200 research and analysis projects across domains such as healthcare, commerce, ecology, law, economics, and culture.

🎒 Inquiries for Lectures and Outsourcing

※ Kmong Prime Expert (Top 2%)

📘 Bio (Summary)

2024.07 ~ Present

CEO of Happy AI, a company specializing in Generative AI and Big Data analysis

Ph.D. in Engineering (Artificial Intelligence)

Dongguk University Graduate School of AI

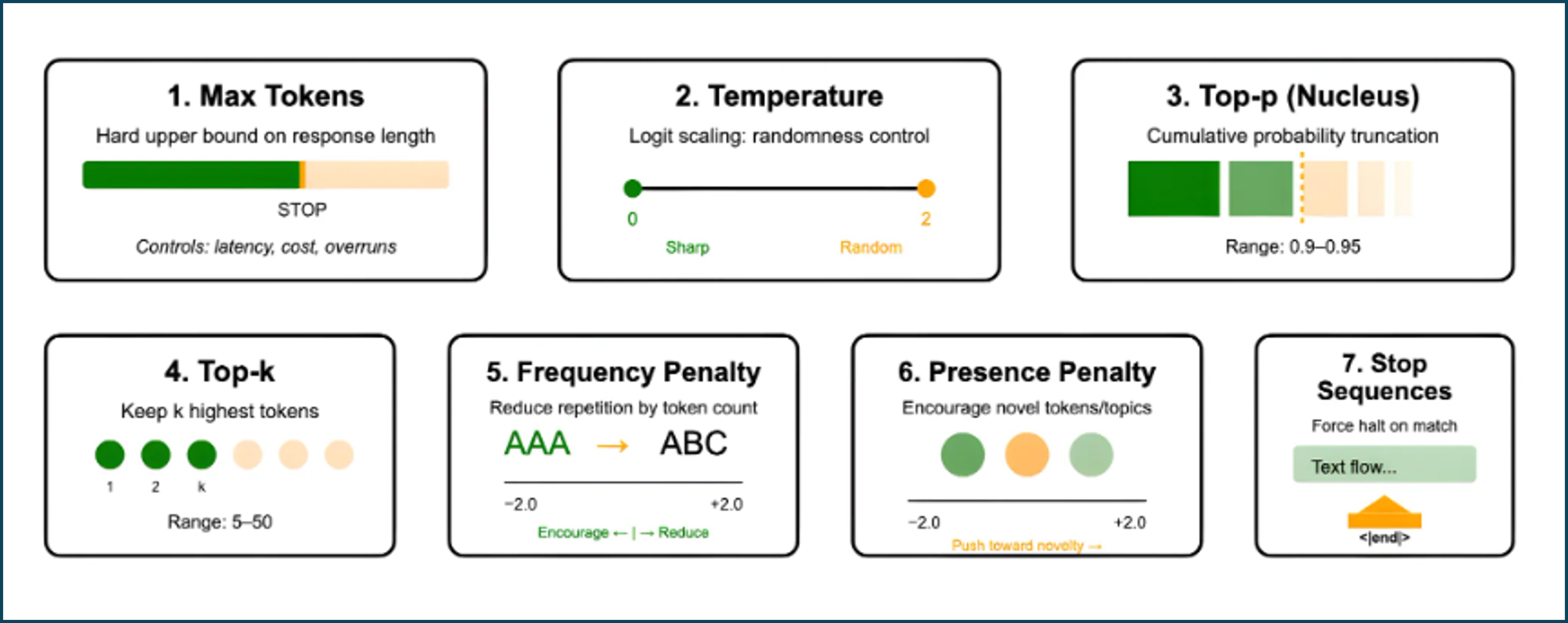

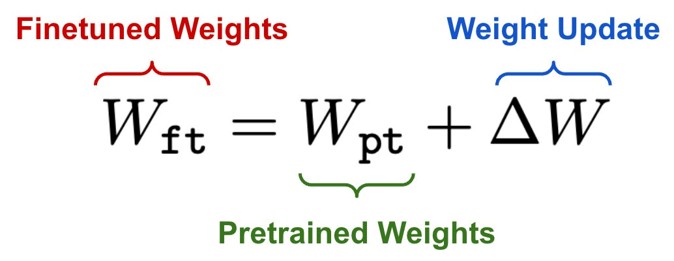

Detailed Major: Large Language Models (LLM)

(2022.03 ~ 2026.02)

2023 ~ 2025

AI Columnist, Public News

(Generative AI Bias, RAG, LLM Application Issues)

2021 ~ 2023

Developer at Stellavision, an AI and big data company

2018 ~ 2021

Natural Language Processing and Big Data Analysis Researcher at a government-funded research institute

🔹 Areas of Expertise (Lecture & Project Focused)

Generative AI and LLM Utilization

AI-based Big Data Analysis

Survey, review, media, policy, and academic data

Natural Language Processing (NLP) · Text Mining

Public and Corporate AI Task Automation

🎒 Courses & Activities (Selected)

2024 ~ 2025

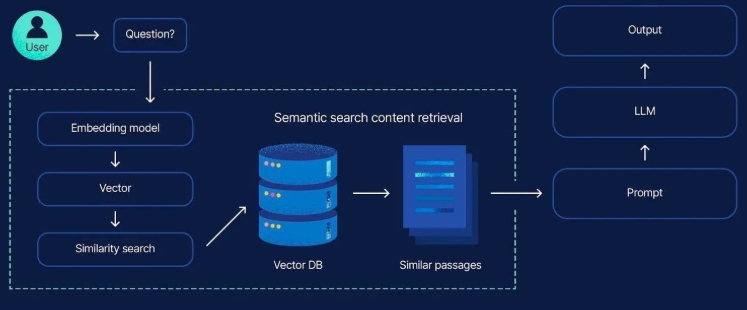

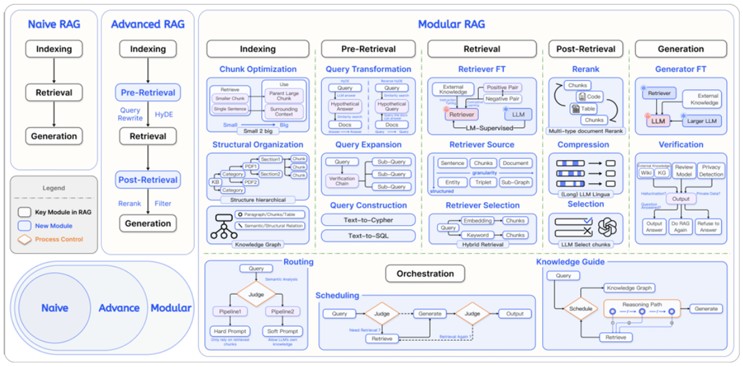

LangChain·RAG-based LLM Programming – Samsung SDS

LLM Theory and RAG Chatbot Development Practice – Seoul Digital Foundation

Introduction to ChatGPT-based Big Data Analysis – LetUin Edu

AI Fundamentals & Prompt Engineering Techniques – Korea Vocational Development Institute

LDA & Sentiment Analysis with ChatGPT – Inflearn

Python-based Text Analysis – Seoul National University of Science and Technology

Building LLM Chatbots Using LangChain – Inflearn

2023

Python Basics Using ChatGPT – Kyonggi University

Big Data Expert Course Special Lecture – Dankook University

Fundamentals of Big Data Analysis – LetUin Edu

💻 Projects (Summary)

Building a Private LLM-based RAG Chatbot (Korea Electric Power Corporation)

LLM-based Forest Restoration Big Data Analysis (National Institute of Forest Science)

Private LLM Text Mining Solution for an Internal Network (Government Agency)

LLM Development Based on Instruction Tuning and RLHF

Healthcare, Law, Policy, and Education Data Analysis

AI Analysis of Survey, Review, and Media Data

→ More than 200 projects completed for public institutions, corporations, and research institutes

📖 Publications (Selected)

Improving Commonsense Bias Classification by Mitigating the Influence of Demographic Terms (2024)

Improving Generation of Sentiment Commonsense by Bias Mitigation

– International Conference on Big Data and Smart Computing (2023)

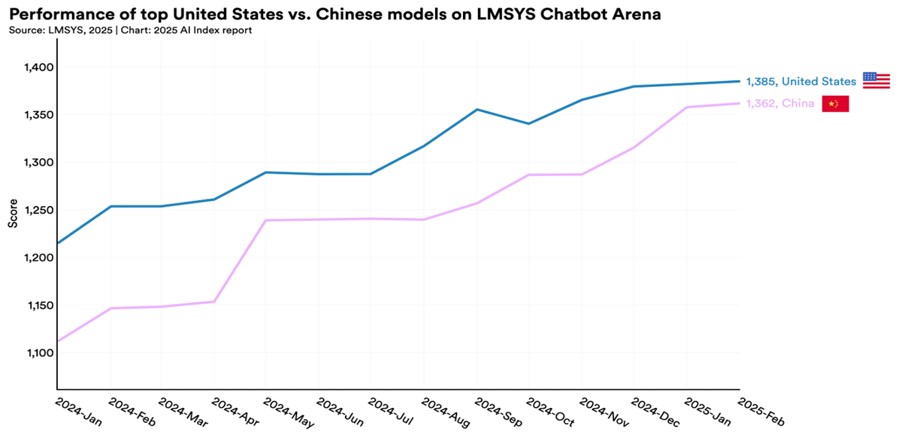

Analysis of Perceptions of LLM Technology Based on News Article Big Data (2024)

Numerous NLP-based text mining studies

(Forestry, Environment, Society, and Healthcare sectors)

🔹 Others

Python-based data analysis and visualization

Data analysis using LLMs

Improving work productivity using ChatGPT, LangChain, and Agents

![[Free] TEXTOM 24 New Version Basic Course: SNS Perception Analysis for Big Data Basic Analysis Paper Writing (course thumbnail)](https://cdn.inflearn.com/public/courses/331446/cover/90f3205d-1a4e-487b-ace0-350b844a12f6/banner1.png?w=420)

![[Free] Basic Text Mining: App Review Analysis with Python (40-minute completion) (course thumbnail)](https://cdn.inflearn.com/public/courses/331163/cover/74cc657a-a8f9-4a78-8edb-0d5fcd4c4c75/331163.png?w=420)

![[Practical] TEXTOM Practical Lecture: Text Analysis/Text Mining for Big Data Thesis Writing (course thumbnail)](https://cdn.inflearn.com/public/courses/330219/cover/222b8ffa-fe15-4636-b10b-01cfd19a4114/그림1_크기줄임.png?w=420)

![[AI Survival Guide] How to Survive Without Competing with AI (course thumbnail)](https://cdn.inflearn.com/public/files/courses/340653/cover/ai/1/fdd3be47-1f8d-4850-8a81-c266158dfe84.png?w=420)