[Side Project After Work] Big Data Analysis Certification Practical Exam (Type 1, 2, 3)

We help non-majors and beginners pass the Big Data Analysis Certification (Practical Exam) quickly! Theory is kept light and practice solid, focusing on the core points that are sure to appear on the exam based on past questions, with no need for complex background knowledge.

5,315 learners

Level: Beginner

Course period: 12 months

News

80 articles

The challenge is scheduled to open sometime next week.

(The approval schedule from Inflearn has not been finalized yet, so the opening announcement may come out on short notice.) If you are currently taking the course, please wait a little before applying!

We plan to issue a separate discount coupon and share it with you. Thank you 😊

Practice problems for Task Type 1 have been updated. 🥰

If you haven't started studying yet, please use the new version for your studies.

The existing (old) versions will be deleted sequentially.

However, out of consideration for students who are currently enrolled, we will keep them at the bottom of the curriculum until May before deleting them.

Newly Added Lectures

Lectures Scheduled for Deletion

Please note that updates will be ongoing until May, so the order may change.

Thank you.

I'm rooting for you to pass this exam.

The latest revised edition for 2026 has been published.

If you don't have the book yet, feel free to join the event! (10 copies will be given away)

https://youtube.com/shorts/EVDZYsDurOI?si=hYk02shY_tOHbu39

If you already have the previous edition, that is no problem at all for taking the course.

I will update everything with the latest content!

I will also soon prepare and announce a "Squid Game" challenge to help you get ready for the 12th exam!

Thank you.

The final results for the 11th Big Data Analytics Engineer Practical Exam have been announced!

Congratulations to those who passed. If you received disappointing results, let's use this experience as a stepping stone and join us again next year with the determination to grow even more!!

I will also reflect on this exam content and the feedback you've provided, and come back next year with an even more updated course. 💪💪💪

And

I'm a bit embarrassed, but thanks to all of you, I received an award at the Inflearn Awards yesterday! Thank you so much :)

Wrap up the year well and have a happy Christmas and New Year! 🙇🏼‍♂️🙇🏼‍♂️🙇🏼‍♂️

We'll have to wait for the results, but I've put together a video walking through the 11th exam.

Congratulations to everyone who took the Big Data Analytics Engineer exam - great job! 😊

(Excluding the t-test and sensitivity questions)

How did you find it compared to previous exams? I've heard opinions that it was similar to past questions and relatively manageable, but I'm curious about your experience! 🤔

Why is `equal_var=True` used when the problem doesn't mention equal variance?

Thank you to Song** for the question. In Task Type 3, Subproblem 3 of the practice problem, the term "equal variance" does not appear directly in the problem text. However, the solution reads as follows:

```python
# 3
from scipy import stats

# Student's t-test: equal_var=True pools the two group variances
# (df, cond1, cond2 come from the problem setup)
result = stats.ttest_ind(df[cond1]['Resistin'], df[cond2]['Resistin'], equal_var=True)
print(round(result.pvalue, 3))
```

That is, I assumed equal variances (Student's t-test).

The reasons are as follows. The problem was a typical three-stage testing problem with the following flow:

1. Check whether the variances of the two groups differ using an F-test
2. Calculate the pooled variance estimator
3. Perform an independent samples t-test using the pooled variance
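The first step, the F-test for equal variances, can be sketched in scipy as below. This is a minimal sketch under stated assumptions: scipy has no single two-sample F-test function, so the hypothetical helper `f_test_equal_var` (not from the course) takes F as the ratio of the two sample variances and computes a two-sided p-value from `stats.f`. The `group1`/`group2` values are made-up placeholders for the two groups in the problem, and the exact form an exam asks for (which variance goes in the numerator, one- or two-sided) depends on the problem wording.

```python
import numpy as np
from scipy import stats

# Made-up data standing in for the two groups (e.g. df[cond1]['Resistin'])
group1 = np.array([12.1, 14.3, 11.8, 13.5, 15.2, 12.9, 14.0])
group2 = np.array([10.4, 11.9, 12.6, 10.8, 13.1, 11.5])

def f_test_equal_var(a, b):
    """Hypothetical helper: two-sided F-test for H0: var(a) == var(b)."""
    var_a = np.var(a, ddof=1)  # sample variance (n - 1 in the denominator)
    var_b = np.var(b, ddof=1)
    f_stat = var_a / var_b
    dfn, dfd = len(a) - 1, len(b) - 1
    # Two-sided p-value: double the smaller tail probability, capped at 1
    lower = stats.f.cdf(f_stat, dfn, dfd)
    upper = stats.f.sf(f_stat, dfn, dfd)
    p_value = min(1.0, 2 * min(lower, upper))
    return f_stat, p_value

f_stat, p_value = f_test_equal_var(group1, group2)
print(round(f_stat, 3), round(p_value, 3))
```

Because the test is two-sided, swapping the groups inverts F but leaves the p-value unchanged.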

The instruction to calculate a pooled variance already presupposes that the two groups have equal variances.
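For reference, the standard pooled variance estimator and the Student's t statistic built on it are shown below, where $n_1, n_2$ are the group sizes, $s_1^2, s_2^2$ the sample variances, and $\bar{x}_1, \bar{x}_2$ the group means. Combining the two sample variances into a single estimate $s_p^2$ only makes sense if both estimate the same population variance $\sigma^2$, which is exactly the equal-variance assumption.

$$
s_p^2 = \frac{(n_1 - 1)\,s_1^2 + (n_2 - 1)\,s_2^2}{n_1 + n_2 - 2}
$$

$$
t = \frac{\bar{x}_1 - \bar{x}_2}{s_p \sqrt{\dfrac{1}{n_1} + \dfrac{1}{n_2}}}, \qquad \text{df} = n_1 + n_2 - 2
$$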

Therefore, I solved it using `equal_var=True`.
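As a quick check of this reasoning, the sketch below computes the pooled-variance t-test by hand and compares it with `stats.ttest_ind(..., equal_var=True)`; the two should agree. It also runs Welch's test (`equal_var=False`), which does not pool the variances and generally gives a different p-value when group sizes and variances differ. The data and the helper name `pooled_t_test` are illustrative assumptions, not part of the original problem.

```python
import numpy as np
from scipy import stats

# Made-up data for two independent groups
group1 = np.array([12.1, 14.3, 11.8, 13.5, 15.2, 12.9, 14.0])
group2 = np.array([10.4, 11.9, 12.6, 10.8, 13.1, 11.5])

def pooled_t_test(a, b):
    """Hypothetical helper: Student's t-test computed from the pooled variance."""
    n1, n2 = len(a), len(b)
    s1_sq, s2_sq = np.var(a, ddof=1), np.var(b, ddof=1)
    # Pooled variance: weighted average of the two sample variances
    sp_sq = ((n1 - 1) * s1_sq + (n2 - 1) * s2_sq) / (n1 + n2 - 2)
    t_stat = (a.mean() - b.mean()) / np.sqrt(sp_sq * (1 / n1 + 1 / n2))
    dof = n1 + n2 - 2
    # Two-sided p-value from the t distribution
    p_value = 2 * stats.t.sf(abs(t_stat), dof)
    return t_stat, p_value

manual_t, manual_p = pooled_t_test(group1, group2)
student = stats.ttest_ind(group1, group2, equal_var=True)   # pooled (Student)
welch = stats.ttest_ind(group1, group2, equal_var=False)    # unpooled (Welch)

print(round(manual_p, 3), round(student.pvalue, 3), round(welch.pvalue, 3))
```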

Additionally:

- One-sample t-test: no equal-variance test needed (there are no two groups to compare)
- Paired t-test: no equal-variance test needed (only the differences are used)
- Independent samples t-test: consider an equal-variance test
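The three cases map onto scipy functions roughly as sketched below, with made-up data. The sketch also illustrates why the paired t-test needs no equal-variance check: `stats.ttest_rel` gives the same result as a one-sample t-test on the differences against 0. The reference mean of 75 for the one-sample test is an arbitrary assumption.

```python
import numpy as np
from scipy import stats

# Made-up paired measurements on the same six subjects
before = np.array([72.0, 68.5, 75.2, 80.1, 66.3, 71.8])
after = np.array([70.2, 67.9, 73.0, 78.4, 66.0, 69.5])

# Made-up independent groups (different subjects)
group_a = np.array([12.1, 14.3, 11.8, 13.5, 15.2, 12.9, 14.0])
group_b = np.array([10.4, 11.9, 12.6, 10.8, 13.1, 11.5])

# One-sample t-test: one group vs. a reference mean (75 is an arbitrary assumption)
one_sample = stats.ttest_1samp(before, popmean=75)

# Paired t-test: works only on the differences, so no equal-variance check
paired = stats.ttest_rel(before, after)
on_diffs = stats.ttest_1samp(before - after, popmean=0)

# Independent samples t-test: equal_var should follow the variance check / problem wording
independent = stats.ttest_ind(group_a, group_b, equal_var=True)

print(round(one_sample.pvalue, 3))
print(round(paired.pvalue, 3), round(on_diffs.pvalue, 3))
print(round(independent.pvalue, 3))
```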

Tomorrow is the Big Data Analytics Engineer exam.

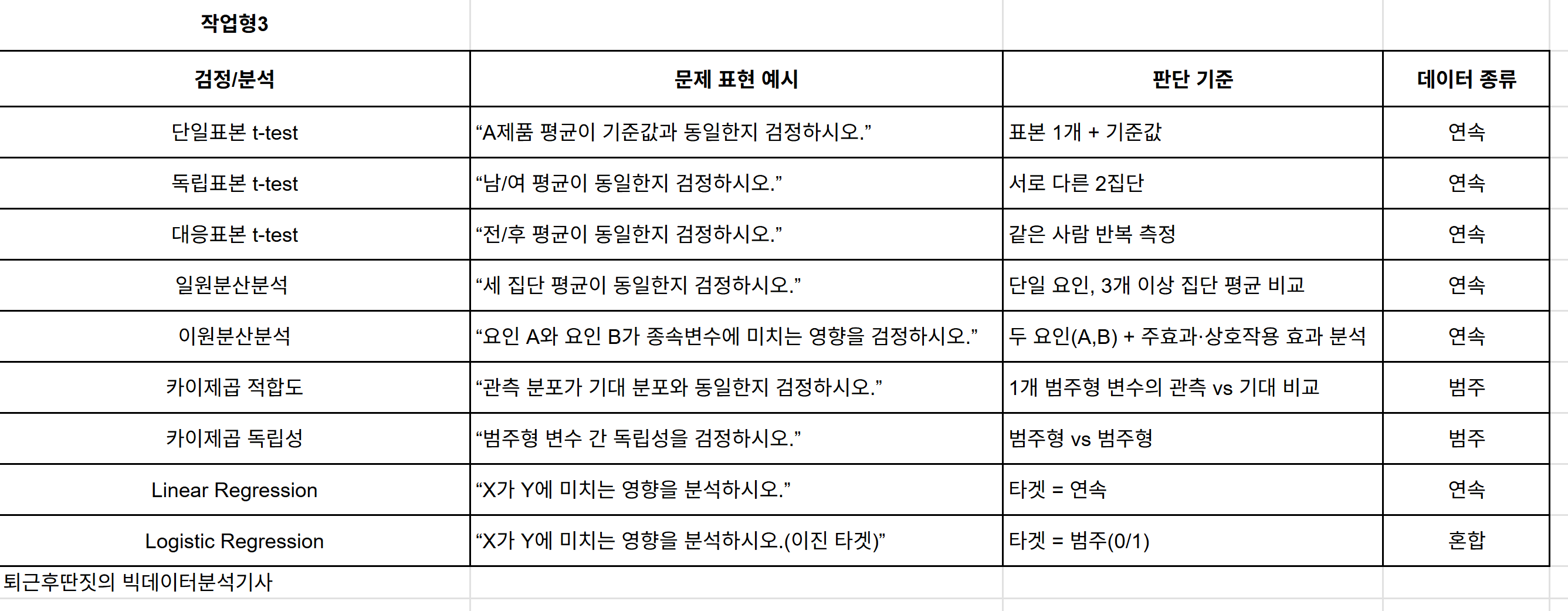

I wish you well on your exam. I've organized examples of how Task Type 3 questions are typically worded.

Good luck on your exam 👏👏

Learning by Example Problem Types

- Non-parametric methods are excluded due to low priority